Overview

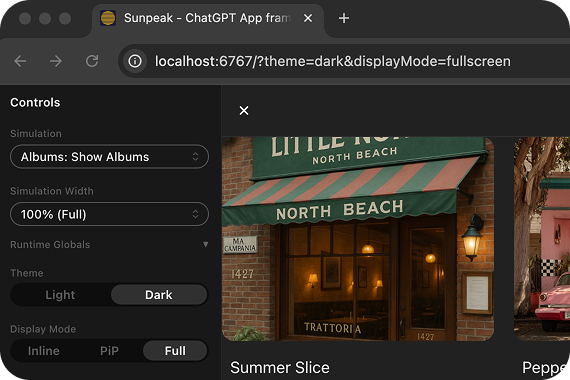

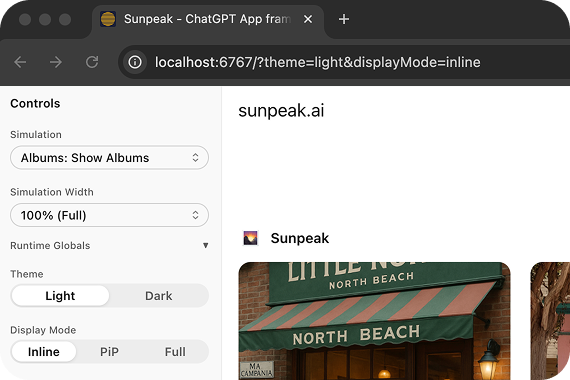

sunpeak provides two levels of automated Playwright testing for MCP Apps:

- E2E tests against the inspector: the inspector replicates the ChatGPT and Claude runtimes locally, and simulations (JSON fixtures) define reproducible tool states. Playwright loads a simulation in the inspector via URL and asserts against the rendered resource. You can test every combination of host, theme, display mode, and device type without deploying or spending API credits.

- Live tests against real hosts: sunpeak/test provides Playwright fixtures that open real ChatGPT (and future hosts), send messages, wait for app iframes, and let you assert against the rendered result. sunpeak maintains all host DOM interaction (auth, selectors, iframe access), so you only write resource assertions.

| Command | What it tests | Runtime |
|---|---|---|
| pnpm test | Unit tests (Vitest) | jsdom |
| pnpm test:e2e | E2E tests against the inspector | Playwright + inspector |
| pnpm test:live | Live tests against real ChatGPT | Playwright + real host |

E2E Testing

E2E tests are Playwright specs in tests/e2e/*.spec.ts. The dev server starts automatically: Playwright launches it before running tests.

Writing E2E Tests

Use createInspectorUrl to load a simulation in the inspector with specific host, theme, and display mode settings.

URL Parameters

The createInspectorUrl function accepts parameters for configuring host, theme, display mode, device type, safe area insets, and more. See the Inspector API Reference for the complete list.
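To make the URL-based approach concrete, here is a hypothetical stand-in for createInspectorUrl that serializes a simulation name plus display settings into query parameters. The function name buildInspectorUrl, the option keys, the base path, and the example values are all assumptions for illustration, not sunpeak's actual signature; in real specs, use createInspectorUrl as documented in the Inspector API Reference.

```typescript
// Hypothetical stand-in for illustration only; NOT sunpeak's createInspectorUrl.
// Option keys, values, and the "/" base path are assumptions.
type Host = "chatgpt" | "claude";
type Theme = "light" | "dark";

interface InspectorUrlOptions {
  simulation: string; // simulation fixture name (hypothetical key)
  host?: Host;
  theme?: Theme;
  displayMode?: string; // assumed free-form, e.g. "inline" or "fullscreen"
  deviceType?: string; // assumed free-form, e.g. "mobile" or "desktop"
}

function buildInspectorUrl(options: InspectorUrlOptions, base = "/"): string {
  const params = new URLSearchParams();
  // Serialize only the options that were set, in the order they appear in the object.
  for (const [key, value] of Object.entries(options)) {
    if (value !== undefined) params.set(key, String(value));
  }
  return `${base}?${params.toString()}`;
}

const url = buildInspectorUrl({ simulation: "show-albums", host: "claude", theme: "dark" });
console.log(url); // /?simulation=show-albums&host=claude&theme=dark
```

In a Playwright spec, a URL like this is what you would pass to page.goto() before asserting on the rendered resource.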

Testing Backend-Only Tools

If your resource calls backend tools via useCallServerTool, define mock responses using the serverTools field in the simulation JSON. The inspector resolves these mocks based on the tool call arguments.

The serverTools field supports both a simple form (a single result) and a conditional form (an array of when/result entries). See the Simulations API Reference for details.
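The conditional form can be pictured as a first-match lookup over when/result entries. The sketch below assumes a simple form shaped as { result } and a conditional form whose when fields must each equal the matching call argument exactly; the type names, the resolveMock function, and the albumId argument are hypothetical, and the real matching rules are defined in the Simulations API Reference.

```typescript
// Hypothetical types for illustration; the real schema is in the Simulations API Reference.
type ToolArgs = Record<string, unknown>;

interface ConditionalMock {
  when: ToolArgs; // argument values that must all match (assumed: strict equality per field)
  result: unknown;
}

// Assumed shapes: simple form = one result for every call; conditional form = when/result array.
type ServerToolMock = { result: unknown } | ConditionalMock[];

function resolveMock(mock: ServerToolMock, args: ToolArgs): unknown {
  if (!Array.isArray(mock)) return mock.result;
  // The first entry whose `when` fields all equal the corresponding call arguments wins.
  const match = mock.find((entry) =>
    Object.entries(entry.when).every(([key, value]) => args[key] === value),
  );
  // Assumption: when no entry matches, there is no result (undefined).
  return match?.result;
}

// Hypothetical mocks for a tool that takes an albumId argument.
const albumMocks: ServerToolMock = [
  { when: { albumId: "a1" }, result: { title: "First album" } },
  { when: { albumId: "a2" }, result: { title: "Second album" } },
];

console.log(resolveMock(albumMocks, { albumId: "a2" })); // { title: 'Second album' }
```

First-match ordering means more specific when entries should come before more general ones.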

Example E2E Test Structure
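One way to organize an E2E spec is to register one Playwright test per combination of settings. This minimal sketch builds that matrix as a cartesian product. The host values come from the runtimes the inspector replicates; the display-mode values and the buildMatrix name are assumptions for illustration.

```typescript
// Axis values: hosts from the runtimes the inspector replicates; display modes are assumed.
const hosts = ["chatgpt", "claude"] as const;
const themes = ["light", "dark"] as const;
const displayModes = ["inline", "fullscreen"] as const;

interface TestCase {
  host: (typeof hosts)[number];
  theme: (typeof themes)[number];
  displayMode: (typeof displayModes)[number];
}

// Cartesian product: one test case per host x theme x display mode combination.
function buildMatrix(): TestCase[] {
  const cases: TestCase[] = [];
  for (const host of hosts) {
    for (const theme of themes) {
      for (const displayMode of displayModes) {
        cases.push({ host, theme, displayMode });
      }
    }
  }
  return cases;
}

// In a spec you would register one test per case, e.g.
// for (const c of buildMatrix()) test(`${c.host} ${c.theme} ${c.displayMode}`, async ({ page }) => { ... });
const matrix = buildMatrix();
console.log(matrix.length); // 8
```

Generating cases from data keeps every test name unique and means adding a theme or display mode is a single array edit.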

A typical E2E test file exercises a single resource across different hosts, themes, and display modes.

Live Testing

Live tests validate your MCP Apps inside real ChatGPT instead of the inspector. They open a browser, navigate to ChatGPT, send messages that trigger tool calls against your MCP server, and verify the rendered app using Playwright assertions. This catches issues that inspector tests can’t: real MCP connection behavior, actual LLM tool invocation, host-specific iframe rendering, and production resource loading.

Prerequisites

- A ChatGPT account with MCP/Apps support

- A tunnel tool: ngrok, Cloudflare Tunnel, or similar

- A browser session: logged into chatgpt.com in Chrome, Arc, Brave, or Edge

One-Time Setup

- In ChatGPT, go to Settings > Apps > Create.

- Set the app name to match your package.json name exactly. Live tests type /{appName} ... to invoke your app, and ChatGPT matches on this name.

- Enter your tunnel URL with the /mcp path (e.g., https://abc123.ngrok.io/mcp).

- Save the connection.

Running Live Tests

Run pnpm test:live. The command:

- Imports your ChatGPT session from your browser (Chrome, Arc, Brave, or Edge), falling back to a manual login window if no session is found.

- Starts sunpeak dev --prod-resources automatically.

- Refreshes the MCP server connection in ChatGPT settings (once, in globalSetup, before any workers start).

- Runs tests/live/*.spec.ts files fully in parallel; each test gets its own chat window.

Live tests always run with a visible browser window. chatgpt.com uses bot detection that blocks headless browsers.

Writing Live Tests

Import test and expect from sunpeak/test to get a live fixture that handles auth, message sending, and iframe access automatically.

The live fixture provides:

- invoke(prompt): starts a new chat, sends the prompt (with host-specific formatting such as /{appName} for ChatGPT), waits for the app iframe, and returns a FrameLocator
- startNewChat(): opens a fresh conversation (for multi-step flows)
- sendMessage(text): sends a message with host-appropriate formatting
- waitForAppIframe(): waits for the MCP app iframe to render and returns a FrameLocator
- sendRawMessage(text): sends a message without any prefix
- setColorScheme(scheme, appFrame?): switches the host to the 'light' or 'dark' theme; optionally pass an app FrameLocator to wait for it to update
- page: the raw Playwright Page object for advanced assertions
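The difference between sendMessage and sendRawMessage comes down to host-specific prefixing. The sketch below illustrates it under two assumptions: ChatGPT messages take the form /{appName}, a space, then the prompt; and hosts without a known prefix receive the text unchanged. formatHostMessage and appNameFromPackageJson are hypothetical helpers, not part of sunpeak/test, which does this formatting for you.

```typescript
// Hypothetical helpers for illustration; sunpeak/test performs this formatting itself.
// Assumption: ChatGPT expects "/{appName} " before the prompt; other hosts get the text unchanged.
const hostPrefixes: Record<string, (appName: string) => string> = {
  chatgpt: (appName) => `/${appName} `,
};

// The app name must match package.json "name" exactly, since ChatGPT matches on it.
function appNameFromPackageJson(packageJsonText: string): string {
  const { name } = JSON.parse(packageJsonText) as { name?: unknown };
  if (typeof name !== "string" || name.length === 0) {
    throw new Error("package.json has no usable name field");
  }
  return name;
}

// sendMessage-style formatting: prepend the host-specific prefix, if the host has one.
function formatHostMessage(host: string, appName: string, text: string): string {
  const prefix = hostPrefixes[host];
  return prefix ? prefix(appName) + text : text;
}

const appName = appNameFromPackageJson('{ "name": "my-albums-app", "version": "0.1.0" }');
console.log(formatHostMessage("chatgpt", appName, "Use the show-albums tool"));
// /my-albums-app Use the show-albums tool
```

Keeping prefixes in a per-host table mirrors how support for a new host could be added without touching test code.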

Live tests currently target ChatGPT (chatgpt). When new hosts are supported, you can add them with a one-line change.

Troubleshooting

'Not logged into ChatGPT' error

On first run, a browser window opens for you to log in to ChatGPT. The session is saved to .auth/chatgpt.json but typically only lasts a few hours because Cloudflare’s cf_clearance cookie is HttpOnly and cannot be persisted across runs. When you see this error, re-authenticate in the browser window that opens. If it keeps failing, delete the .auth/ directory and run pnpm test:live again.

Tunnel not reachable

Verify your tunnel is running and the URL is correct. The test checks the tunnel’s /health endpoint before proceeding.
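If you want to rule out tunnel problems yourself before a run, a pre-flight check along these lines may help. It assumes the health endpoint lives at /health on the tunnel's origin (so the /mcp path is replaced, not extended), and it takes the fetch implementation as a parameter so it can be exercised without a network. healthUrl and assertTunnelReachable are hypothetical names, not sunpeak APIs.

```typescript
// Hypothetical pre-flight check; sunpeak runs its own /health check internally.
type FetchLike = (url: string) => Promise<{ ok: boolean; status: number }>;

// Resolve /health against the tunnel origin:
// "https://abc123.ngrok.io/mcp" -> "https://abc123.ngrok.io/health" (assumed location).
function healthUrl(tunnelUrl: string): string {
  return new URL("/health", tunnelUrl).toString();
}

async function assertTunnelReachable(tunnelUrl: string, fetchImpl: FetchLike): Promise<void> {
  const url = healthUrl(tunnelUrl);
  let response: { ok: boolean; status: number };
  try {
    response = await fetchImpl(url);
  } catch (err) {
    // Network-level failure: DNS, refused connection, tunnel process not running, etc.
    throw new Error(`Tunnel not reachable at ${url}: ${String(err)}`);
  }
  if (!response.ok) {
    throw new Error(`Tunnel health check failed: HTTP ${response.status} from ${url}`);
  }
}
```

With Node 18 or later you can pass the global fetch, for example await assertTunnelReachable("https://abc123.ngrok.io/mcp", fetch).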

'ChatGPT DOM may have changed' warning

ChatGPT occasionally updates its UI. sunpeak checks selector health at startup; if the selectors are stale, please file an issue.

Tool not called by ChatGPT

Live tests use specific prompts like “Use the show-albums tool to…” to reliably trigger tool calls. If a tool isn’t called, the test retries once. Persistent failures may indicate the tool isn’t properly connected — check ChatGPT settings.
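The retry policy described above amounts to at most two attempts, with the second attempt's error surfacing if it also fails. sunpeak applies this itself; the hypothetical withOneRetry helper below only illustrates those semantics.

```typescript
// Hypothetical helper: run an async attempt and, if it throws, run it exactly once more.
async function withOneRetry<T>(attempt: () => Promise<T>): Promise<T> {
  try {
    return await attempt();
  } catch {
    // Second and final attempt; if it also fails, its error propagates to the caller.
    return await attempt();
  }
}
```

A failure that survives the retry is the persistent case, which usually points at the connection in ChatGPT settings rather than at the prompt.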

Dive Deeper

Inspector

The inspector that powers E2E tests.

Simulations API Reference

JSON schema, conventions, and auto-discovery.

Inspector API Reference

createInspectorUrl parameters and Inspector component props.